- Blog

- Pantum p2502w install driver takes too long

- Supreme commander 2 torrent repack

- Linux remove drm from epub

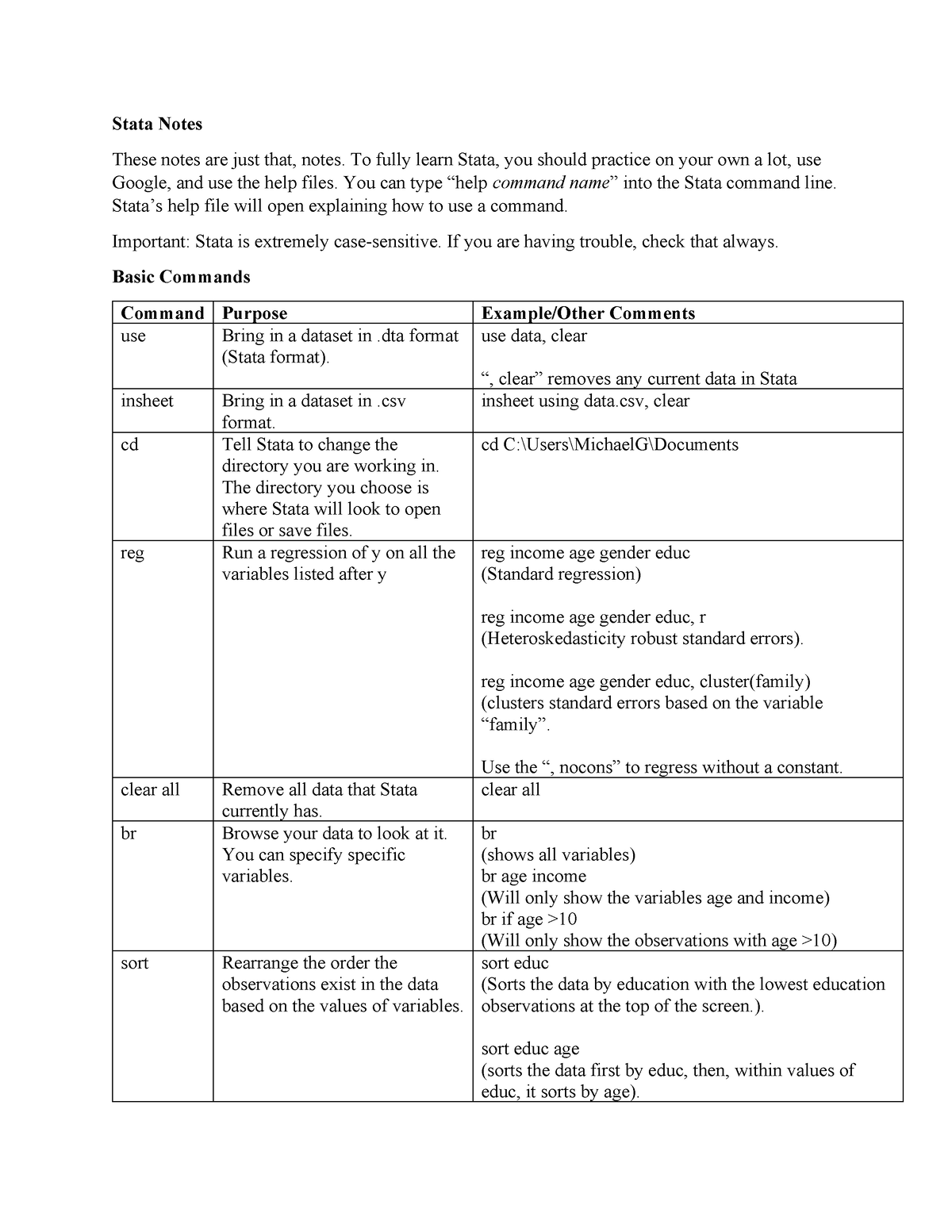

- Cluster standard errors stata

- Download pokemon world 3d

- Kanye west my beautiful dark twisted fantasy

- Humble bundle starbound free

- Starcraft remastered crack status

- Adobe premiere pro cc 2014 keyboard shortcuts download

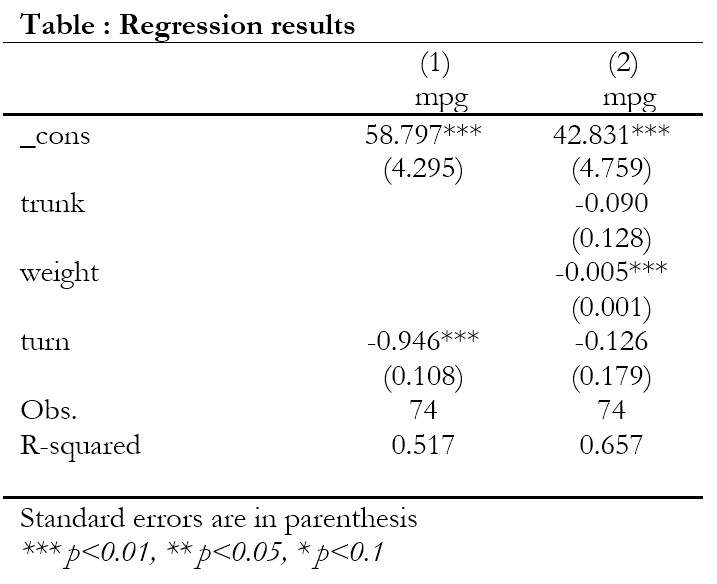

The default so-called "robust" standard errors in Stata correspond to what sandwich() from the package of the same name computes. The only difference is how the finite-sample adjustment is done. By default, sandwich(.) applies no finite-sample adjustment at all, i.e., the sandwich is scaled by 1/n, where n is the number of observations. Alternatively, sandwich(., adjust = TRUE) scales by 1/(n - k), where k is the number of regressors. Stata scales by 1/(n - 1). Of course, asymptotically these do not differ at all. And except for a few special cases (e.g., OLS linear regression) there is no argument for 1/(n - k) or 1/(n - 1) to work "correctly" in finite samples (e.g., unbiasedness). At least not to the best of my knowledge.

So to obtain the same results as in Stata, multiply the covariance matrix returned by sandwich(.) by n/(n - 1).

And just for the record: in the binary response case, these "robust" standard errors are not robust against anything. Provided that the model is correctly specified, they are consistent and it's OK to use them, but they don't guard against any misspecification in the model.
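The rescaling between the 1/n and 1/(n - 1) conventions can be sketched in R as follows. This is a minimal illustration, not the original post's code: the simulated data, the model object `probit`, and the helper name `sandwich1` are assumptions made for the example, and it presumes the sandwich and lmtest packages are installed.

```r
# Assumes the 'sandwich' and 'lmtest' packages are installed.
library(sandwich)
library(lmtest)

# Simulated binary-response data (hypothetical, for illustration only).
set.seed(1)
n <- 200
x <- rnorm(n)
y <- rbinom(n, size = 1, prob = pnorm(0.5 * x))
probit <- glm(y ~ x, family = binomial(link = "probit"))

# sandwich() scales by 1/n by default; multiplying by n / (n - 1)
# switches to the 1/(n - 1) finite-sample adjustment.
sandwich1 <- function(object, ...) {
  sandwich(object) * nobs(object) / (nobs(object) - 1)
}

# Coefficient table with standard errors based on the rescaled sandwich.
coeftest(probit, vcov. = sandwich1)
```

With a few hundred observations the rescaled standard errors differ from the unadjusted ones only in the later decimal places, in line with the adjustments being asymptotically equivalent.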